International Conflict Is Just One Render Away

02.08.2019

A mid-range computer with a custom piece of software downloaded from the Web for free. A couple of hundred pictures, one video, and forty minutes of free time. That’s all it takes for anyone to compile a video of Donald Trump presenting incontrovertible evidence that Russia did indeed meddle in the 2016 US presidential election, leaving him no choice but to withdraw from upcoming talks with Putin. Welcome to deepfaked reality.

Although it may sound like a passage from a Philip K. Dick novel, it’s far from fiction. The roots of the current deepfake wave date back to Looksery, a piece of software developed over 2014 and 2015 and made popular by social media and Kickstarter. This seemingly harmless and simple application was capable of modifying facial expressions in real time for entertainment purposes, making it a forerunner, so to speak, of the variety of filters that help us pass the time on Facebook and Instagram now. To some extent, deepfake software can be considered a much more advanced successor to that particular technology, based on AI algorithms that use machine learning mechanisms (so-called GANs, or generative adversarial networks) to generate fake pictures or video footage that have no actual basis in reality.

Both the term and the technology emerged in a scandal that broke in late 2017, after a Reddit user began posting deepfakes of celebrities’ faces pasted into porn. Victims of the practice included Emma Watson, Natalie Portman, Michelle Obama, Ivanka Trump, Kate Middleton, and Gal Gadot. The technology is widely available: basically anyone is free to download the software, feed it pictures or footage, and make their own fake video. Rendering hyperrealistic faces is one thing. But you can also find software that generates realistic audio feeds, which can be used to put whatever words we may wish into basically anyone’s mouth. All you have to do is feed the app a large enough pool of a given person’s voice samples and in return you get a convincing voice recording, imitating that person’s speech patterns, tone, and accent.

Mind-Bending Examples

The more deepfakes you see on YouTube, the more you realize that the technology is really well polished. Sometimes it’s genuinely hard to differentiate between deepfakes and untouched footage (by the way: see how you stack up by taking the test).

Outside of the more prurient nooks of the Internet, deepfakes began making waves in 2018, when Nicolas Cage was repeatedly pasted into movies he didn’t star in. The Cage fakes were put together with Adobe After Effects and FakeApp, the same free piece of software that was used in 2018 to insert the face of a young Carrie Fisher into the Princess Leia scene in Rogue One, producing the kind of striking resemblance that Industrial Light & Magic, the studio responsible for the film’s VFX content, spent hundreds of thousands of dollars to achieve.

Deepfakes have yet to cause a global incident with major international security implications, although the technology carries considerable potential for one, as evidenced by a recent video showing a faux Mark Zuckerberg being surprisingly open and honest about harvesting and using the most intimate data from billions of people to manipulate them down the line.

The Uncanny Valley

The relationship between deepfakes and the so-called uncanny valley is particularly interesting. A concept from aesthetics, the uncanny valley describes the moment in which a hyperrealistic-looking robot, animation, or any other object bears such a resemblance to humans that it begins to elicit discomfort in the viewer, sometimes even repulsion.

“The definition of the uncanny valley has always been quite broad and extended beyond physical robots to include bots and computer-rendered faces. GANs are capable of rendering objects with such precision and in such an abstract manner that the end result tends to produce revulsion or a peculiar uncanniness in people. The strange sensation is produced not only by robots, but also by digitally rendered faces fitted with voice networks, which may eventually lead people to fear such interactions,” claims Aleksandra Przegalińska, philosopher and academic scholar, whose findings in the matter were compiled into her January 2018 paper In The Shades of the Uncanny Valley: An Experimental Study of Human-Chatbot Interaction.

AI In Our Everyday Lives

Anyone disconcerted by the vision of AI algorithms becoming powerful enough to faithfully render human faces should take a good look around: similar algorithms are ever more present in our daily lives, and that presence is gradually getting us accustomed to them. AI has found its way into nearly all aspects of our modern world, from the search engines we use, through apps, all the way up to the self-service grocery stores that have been gaining popularity across the globe. Przegalińska takes the notion further, and claims that AI algorithms are not just increasingly prevalent, but basically ubiquitous.

“Every Netflix user has daily contact with AI. The streaming platform uses algorithms belonging to the family of so-called automatic clustering algorithms. This clustering is responsible for recommending specific titles based on what we’ve already seen or what people similar to us have seen. Rankers are also ubiquitous, for example across stores like Amazon, which has its own personalized recommendation system. Banks, on the other hand, profile their clients using simple regressions, more advanced neural networks, and deep learning networks. The phones many of us carry in our pockets are often fitted with affective computing systems (which handle methods and instruments used to analyze, interpret, and simulate emotional states; ed. note), another example of AI. So are the utilities that academics use to screen for plagiarism. Examples abound.”

Those who are bewildered by these developments apparently slept through the early stages of a quiet revolution which continues to unfold before our very eyes, on nearly all possible fronts. Just take a look at one of the latest CollegeHumor efforts: in the wake of the bitterly received Game of Thrones finale, the CH crew decided to task an AI with rewriting the last episodes of the hit series. The result was pretty comical, but that was probably the intention of the absurdist humor-oriented website. Still, that does not change the fact that AI is nowadays ubiquitous enough to be used in areas where we would never expect it.

Benefits, Drawbacks, and Threats

All that aside, the emergence of deepfakes will definitely trigger an era of mass distrust in the veracity of video footage and will prompt efforts to redefine journalistic integrity. After all, the technology can easily be used to manufacture fake news en masse, and that is not the only unethical application that quickly springs to mind. “The danger is clear: networks like these can be used to mislead people by generating pictures and footage that look deceptively real, but illustrate events that never took place. The good news, however, is that we’ve already developed AIs which can identify deepfakes with considerable accuracy. You can already install software to shield yourself from being tricked by fake media,” Przegalińska warns. “Targets of deepfaking efforts include politicians first and foremost, as the Web is brimming with pictures and video of political figures, which makes compiling a fake video starring such people relatively simple. The terrifying thing is that some of the data used by networks employed by AI algorithms simply cannot be reached,” she adds.

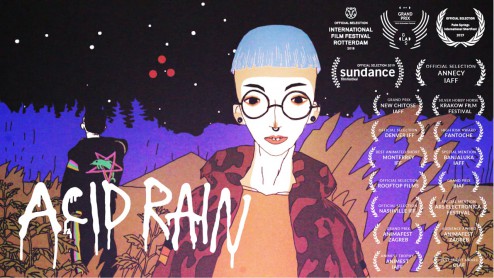

Well, are there any benefits to deepfake algorithms being widely available? Neural networks used for rendering can be of great use in fields like art. They’re also highly interesting, from a cognitive standpoint, to graphic artists and may serve as a source of inspiration. “From a cultural angle, this sort of thing may have artistic value and may be seen as beautiful. Consider the animations featured in Janek Simon’s exhibition Synthetic Folklore from earlier this year,” Przegalińska argues. The technology can also be a boon for the broadly defined entertainment industry. It could, for instance, spare studios the pricey and lengthy reshoots that take place after screenplay rewrites or major concept changes. In an interview with Inverse, Ben Morris, a VFX specialist who worked on Star Wars: The Last Jedi, revealed that most of the Star Wars saga cast has already been digitally scanned, because you never know when such a “clone” might come in handy.

And if we were to berate ourselves, somewhere down the line, for letting the technology develop in such a sinister and uncontrolled direction, let’s just remember that we were warned, and quite some time ago, too—one only has to think back to “The Waldo Moment” episode from Black Mirror’s second season. One of the user reviews for the episode, posted on IMDb, bears the headline: “It can happen. Perhaps it’s happening.” And we’d be hard-pressed to find a better illustration of the technology and its impact on our everyday life.
