AI-Powered Text Generator Too Dangerous to Release

15.02.2019

OpenAI, an AI research organization backed by tech figures such as Elon Musk and LinkedIn founder Reid Hoffman, has declined to release the full results of its recent AI study. The researchers worry that the system they developed could wreak havoc if it fell into the wrong hands.

At its core, GPT2 is a text generator. The AI system that powers it uses a sizable sample of internet content to predict what the next word in a given sentence should be. The Guardian reports that when tasked with generating new text, GPT2 produces highly plausible output, in both style and subject. It also seems free of the bugs that plagued similar systems in the past: it no longer changes the subject in the middle of a sentence, doesn’t mangle the syntax in longer sentences, and doesn’t forget what it was writing about.
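The idea of predicting the next word from previously seen text can be illustrated with a deliberately tiny sketch. This is not how GPT2 works internally; it is a simple word-pair counter, and the corpus and the function names `train_bigram_model` and `predict_next_word` are hypothetical, chosen only for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical toy corpus standing in for the "sample of internet content".
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept"
model = train_bigram_model(corpus)

print(predict_next_word(model, "the"))  # prints "cat" (seen 3 times after "the")
```

A counter like this only looks one word back, which is exactly why older systems lost the thread mid-sentence; a system such as GPT2 conditions on far more of the preceding text.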

GPT2 has been tested by staffers from The Guardian, who fed it the opening line of Orwell’s 1984, and Wired, which had GPT2 write text based on the phrase “Hillary Clinton and George Soros.” In the former case, the AI spit out a futuristic novel, while in the latter, the generator produced a political screed rife with conspiracy theories and false accusations.

The sheer size of GPT2’s training data is impressive. OpenAI’s research director, Dario Amodei, says that the model is twelve times larger, and was trained on a dataset fifteen times larger, than the AI model previously considered the most advanced in the world. GPT2 was trained on a sample of around ten million articles and posts, selected by trawling links posted on Reddit. The sample itself was forty gigabytes (which equals about thirty-five thousand copies of Moby Dick).
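The Moby Dick comparison can be sanity-checked with simple arithmetic. The snippet below is a rough sketch that assumes decimal gigabytes (one gigabyte equals one billion bytes); the variable names are illustrative only.

```python
# Figures quoted in the article; decimal gigabytes are an assumption.
SAMPLE_BYTES = 40 * 10**9
COPIES_OF_MOBY_DICK = 35_000

# Implied size of a single copy of the novel.
bytes_per_copy = SAMPLE_BYTES / COPIES_OF_MOBY_DICK

print(f"{bytes_per_copy / 10**6:.2f} MB per copy")  # prints "1.14 MB per copy"
```

The comparison therefore implies a little over one megabyte per copy, a figure that can be compared against any plain-text edition of the novel.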

GPT2 was not, however, built for the purpose of creating convincing “deepfakes for text.” The software was designed to perform a variety of tasks, including translation, summarization, and drawing conclusions, and was often much faster and more effective at handling them than AI systems built expressly for those purposes.

However, the quality of the text produced by GPT2 was so high that the researchers who created it grew concerned and ultimately decided against releasing the system to the general public for the time being, for fear of the potential consequences of its abuse.

“We need to perform experimentation to find out what they can and can’t do,” said Jack Clark of OpenAI. “If you can’t anticipate all the abilities of a model, you have to prod it to see what it can do. There are many more people than us who are better at thinking what it can do maliciously.”

see also

- See Wes Anderson’s "Grand Budapest Hotel" Storyboards

News

See Wes Anderson’s "Grand Budapest Hotel" Storyboards

- More Than Just a New Name. What Changes Can Contestants Expect from This Year’s Papaya Young Creators?

Papaya Young Directors

Papaya Young DirectorsNews

More Than Just a New Name. What Changes Can Contestants Expect from This Year’s Papaya Young Creators?

- Coldplay Won’t Tour Until It Becomes Sustainable

News

Coldplay Won’t Tour Until It Becomes Sustainable

- Insect Diet. EU Food Safety Agency Approves Manufacture of Food with Yellow Mealworm

News

Insect Diet. EU Food Safety Agency Approves Manufacture of Food with Yellow Mealworm

discover playlists

-

John Peel Sessions

17

17John Peel Sessions

-

Cotygodniowy przegląd teledysków

73

73Cotygodniowy przegląd teledysków

-

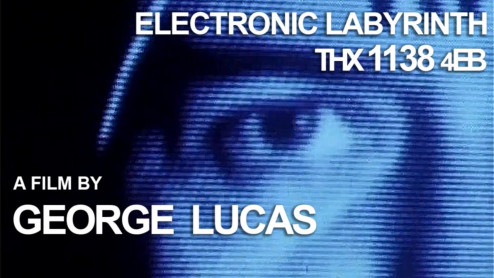

George Lucas

02

02George Lucas

-

CLIPS

02

02CLIPS